13 December 2023

Next.js A/B testing with Unleash. What I’ve learned from conducting tests for a newly modernized application

Yes, this article includes the actual A/B testing process complete with code. But more importantly – I will show you how quickly software developers can use tools such as Unleash. If they’re closely involved in the A/B testing from the beginning, there will be no wasted time on useless back-and-forth conversations between development and marketing. Organize and optimize!

Here’s a little confession. I’ve been working as a frontend developer for years and yet, until recently, I hadn’t run any A/B tests! 🙊

Some people may shrug here – “So what, A/B testing is for marketers, doing little content experiments using Google Optimize (or whatever WYSIWYG tool)”.

Well, as it turns out, not exactly.

The much underestimated A/B testing provides solid data for business

During this project, I realized that not only is A/B testing powerful, but there’s also an excellent practical reason for developers to be deeply involved in running the tests.

My A/B testing philosophy:

- it’s a powerful and underestimated way of ensuring you provide users with what they want.

- it’s data-driven, and all business decisions should ideally be based on solid data.

- it doesn’t take up much time and saves even more in the future.

The problems

Together with my teammates, I’ve been working on a big e-commerce website. Long story short: we completely rebuilt an existing system (incl. frontend, backend, mobile app, infrastructure, and more) that performed well but did not scale. We introduced a new layout and a few new features, but the core user journey stayed the same: allow sellers to offer their products and help buyers to buy them.

For the rebuilt version, we aimed at getting at least the same conversion rate and revenue at launch. We planned on increasing that later on by introducing new exciting features to the users that were not available in the old system. Unfortunately, it was not that easy…

Before we could even begin to think of new features, we needed to solve problems that arose right at the launch of the redesign.

#1 Problem – conversion funnel problems

Analytics tools showed us that we had some conversion funnel problems in comparison to the old system. We weren’t sure what the root cause was. An overly complex new layout, undiscovered bugs, confusing copy, or the shape or color of call-to-action buttons: just about anything could be to blame.

In addition to that, the bounce rate increased. This metric calculates how often someone arrives on our site, views one page, and then leaves. Typically, the higher the bounce rate, the less useful the page seems to the user.

We suspected that the bounce rate surge may have the same root cause as the funnel problem. However, the biggest problem of all was that we were fumbling in the dark…

#2 Problem – improvements that can make things worse

Upon closer examination, we were able to create a list of suggested improvements to the user interface – either the design or copy. We decided to go through it one by one. Among other things, we:

- spotted and fixed a couple of bugs in core user journeys (e.g. iPhone users couldn’t see the “Filter” button at the bottom of the page because it was covered by advertising),

- extended the core flow by adding a couple of new features (e.g. we added sorting at the very top of the mobile view, and a recommendation module that suggests products based on the user’s previous searches),

- put more visual focus on key messages and CTAs.

We thought the improvement process went well, but our subjective thoughts were not reflected in the cold reality of data and metrics. Actually, in some cases, the metrics got worse!

A/B testing

It took us a while, but we finally figured out that we cannot simply trust our assumptions, no matter how rational they seem. Every change, even the smallest one, can have a big impact on the experience and behavior of a user. The tiniest detail could leave them confused and frustrated. While our intuition may have sufficed in past projects, this time we needed to be more careful when introducing new features and improvements.

Someone brought up the idea of implementing A/B experiments. Out of options, few complained. With such experiments, we can roll out new features or improvements to a smaller group of users and thereby decrease the risk of failure and its consequences. If the experiment succeeds, we can roll the change out to all users and improve our performance. If the experiment fails, we can abandon the idea after exposing only a limited group of users to it.

We all agreed about doing the tests, but we still had to go through a lot of discussions. Since it was the first time we were introducing tests into such a system, we needed to talk through the whole implementation. Eventually, we noted down all of our doubts as questions:

- Does the solution manage feature toggles at different layers (frontend, backend) and in different frameworks (Next.js, React Native, Node.js)?

- Does the solution have a test environment (to turn on all the feature toggles without affecting production) and a production environment?

- Are there limits to the number of people who can manage feature toggles?

- Are there limits to the number of feature toggles?

- Can we add our own configurations and fields (e.g. because of different markets, feature toggle X needs to be available on marketplace A, but not marketplace B)?

Then, we answered them based on our research and a couple of spikes done by engineers.

We ended up with two key decisions:

- We chose Unleash as our feature management tool as it fits our needs from both the business and technical side. The platform supports all the key technologies of the project, namely Next.js, a React Native mobile app, and a couple of backend services written in Node.js. We wanted to have an option to introduce feature toggles everywhere so that we can enable and disable new features quickly. Unlike the other options we looked at, Unleash supports all of those, so it was a natural choice.

- We decided to use the Fullstory platform that we had in place before to aggregate all metrics that can lead us to valuable conclusions.

Before we jump into the actual experiments we made, let’s get the theory about A/B testing, Next.js, and Unleash out of the way. If you are already familiar with it, feel free to skip it and jump right into the integration part 🙂

The theory – A/B testing, Next.js, and Unleash make for a powerful combo

What’s A/B testing?

A/B testing is a methodology for comparing two or more variants of the website against each other to determine which variant performs better.

In an A/B test, you take a page and modify it to create a second version of the same page. This change can be as simple as a single button or as large as a complete redesign of the page. Then, half of your traffic is shown the original version of the page (known as control or A) and half is shown the modified version of the page (the variation or B).

Technologies used

Next.js

Next.js is a JavaScript framework that provides an out-of-the-box solution for server-side rendering of React components. With Next.js, engineers can render their React code on the server and send simple HTML to the user. It also supports static site generation, where the page HTML is generated at build time, so the HTML can be cached and reused on each request. The framework offers a range of benefits such as improved SEO and enhanced performance.

Unleash

Unleash is a fast-growing open-source feature management tool. It delivers a complete feature flag solution built with developer experience in mind and it’s great for enabling A/B experiments. Unleash lets teams ship code to production in smaller releases whenever they want and can be used with any language and any framework. You can check how it works by playing with Unleash’s live demo. In our article, we will focus on A/B experiments, but Unleash offers much more than that.

Next.js and Unleash integration

I assume that we already have Next.js in place, so we will focus only on Unleash and its integration with the existing app. 🙂

1. First, we have to install the Unleash SDK in our app.

We’re using the Node.js SDK because we’re doing the entire integration with Unleash on the server side.

`npm install unleash-client`

2. Once the SDK is installed, we can create a simple Unleash client.

UnleashClient is a singleton that initializes the Unleash SDK and starts the synchronization process. Why use a singleton? To avoid multiple instances of the initialized SDK. Unleash uses polling synchronization to fetch the toggles and experiments every few seconds. With a singleton, we are sure that we will have only one synchronization process in the background.
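A minimal sketch of such a singleton could look like the following. To keep it self-contained, the SDK is hidden behind a small `FeatureSdk` interface and created by an injected factory; both are assumptions made for illustration. In a real application the factory would typically wrap `initialize({ url, appName, customHeaders })` from `unleash-client`, whose instance exposes a compatible `getVariant(name, context)` method.

```typescript
// Minimal shape of a variant as used in this article.
interface Variant {
  name: string;
  enabled: boolean;
}

// Subset of the evaluation context; custom fields go into `properties`.
interface ExperimentContext {
  userId?: string;
  properties?: Record<string, string>;
}

// The only SDK capability this sketch relies on (assumed interface).
interface FeatureSdk {
  getVariant(name: string, context: ExperimentContext): Variant;
}

// Hypothetical factory: in a real app it would call `initialize(...)`
// from `unleash-client` and return the resulting instance.
type SdkFactory = () => FeatureSdk;

class UnleashClient {
  private static instance: UnleashClient | undefined;
  private readonly sdk: FeatureSdk;

  private constructor(sdk: FeatureSdk) {
    this.sdk = sdk;
  }

  // Creates the SDK on the first call only. Later calls reuse it,
  // so a single background synchronization process is started.
  static getInstance(createSdk: SdkFactory): UnleashClient {
    if (!UnleashClient.instance) {
      UnleashClient.instance = new UnleashClient(createSdk());
    }
    return UnleashClient.instance;
  }

  getExperimentVariant(name: string, context: ExperimentContext): Variant {
    return this.sdk.getVariant(name, context);
  }
}
```

Injecting the factory also makes the wrapper easy to exercise in tests with a fake SDK, without a running Unleash server.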

3. Now, we can integrate our UnleashClient with Next.js by initializing the client in the getServerSideProps method of the page where we would like to run the experiment.

The implementation is straightforward. On the first call for the UnleashClient instance, Unleash is initialized and synchronization starts. On every subsequent call, we reuse the instance that was created before.

Now, we have to return our experiment variants in page props by calling the getExperimentVariant method with an experiment name and context.
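As a rough sketch of this step, the helper below builds the `props` object that `getServerSideProps` would return. The helper name `buildExperimentProps` and the `VariantLookup` type are hypothetical; the lookup stands in for the client's `getExperimentVariant` method. Only plain fields are copied, because Next.js refuses to serialize `undefined` values in page props.

```typescript
interface Variant {
  name: string;
  enabled: boolean;
  payload?: unknown;
}

type ExperimentContext = { userId?: string; properties?: Record<string, string> };
type Experiments = Record<string, { name: string; enabled: boolean }>;

// Hypothetical lookup, e.g. the singleton's getExperimentVariant method.
type VariantLookup = (name: string, context: ExperimentContext) => Variant;

function buildExperimentProps(
  experimentNames: string[],
  context: ExperimentContext,
  lookup: VariantLookup,
): { props: { experiments: Experiments } } {
  const experiments: Experiments = {};
  for (const name of experimentNames) {
    const variant = lookup(name, context);
    // Keep only serializable fields; an undefined payload would break SSR props.
    experiments[name] = { name: variant.name, enabled: variant.enabled };
  }
  return { props: { experiments } };
}

// In a page file this could be used roughly as:
//   export const getServerSideProps = async () =>
//     buildExperimentProps(["EXP_SOME_EXPERIMENT"], context, lookup);
```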

4. Now we can focus on the React side so that we can read experiments in components.

Let’s create ExperimentsContext

The context is very simple. It keeps an object of experiments that comes from page props (returned by getServerSideProps). Thanks to the context, we can avoid prop drilling and have access to experiments in every single component. Of course, remember to wrap your Page component with that context to make it work.

5. Last but not least – adding the useExperiment hook to use in the components.

The hook has two responsibilities. First, it has to calculate whether the experiment is enabled based on the variant we got from Unleash. Then, the hook has to track the experiment assignment so that we can measure the experiment’s impact on the user.

In our example, we push the event to Google Tag Manager, but you can replace it with anything else (e.g. Google Analytics, FullStory, or even your own service).
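The two responsibilities can be sketched as one framework-free function. The name `resolveExperiment`, the event name `experiment_assigned`, and the event fields are assumptions made for this example. In a React hook, the `experiments` object would come from the context, `dataLayer` would be `window.dataLayer` from Google Tag Manager, and the push would normally sit inside `useEffect` so it does not fire on every render.

```typescript
interface Variant {
  name: string;
  enabled: boolean;
}

type Experiments = Record<string, Variant>;

// Hypothetical event shape pushed to the tracking layer.
interface AssignmentEvent {
  event: string;
  experiment: string;
  variant: string;
}

interface ExperimentResult {
  enabled: boolean;
  variant: string;
}

function resolveExperiment(
  experiments: Experiments,
  name: string,
  dataLayer: AssignmentEvent[],
): ExperimentResult {
  const variant = experiments[name];
  // Unknown experiments and disabled variants both count as "not enabled".
  if (!variant || !variant.enabled) {
    return { enabled: false, variant: "disabled" };
  }
  // Track the assignment so metrics can be split by variant later.
  dataLayer.push({ event: "experiment_assigned", experiment: name, variant: variant.name });
  return { enabled: true, variant: variant.name };
}
```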

First experiment

Now that everything is in place, we can run the very first experiment in the application.

Requirements

The first thing to do is to define a goal. We have a yellow call-to-action button that may or may not be the cause of the high abandonment rate. Let’s assume that the experiment will test the call-to-action button color. We hypothesize that the green button will perform better than the yellow one and consequently, the bounce rate will decrease.

Now, we need to define what chunk of the total traffic should be diverted to the experiment. Let’s say we would like to run the experiment on mobile devices only, for 50% of the incoming mobile traffic.

So half of the mobile users will take part in the experiment.

Additionally, we want to make the experiment sticky. It means that if a user sees the experiment version, they should continue to see this version each time they visit the app – at least until the whole test is called off.

Unleash experiment setup

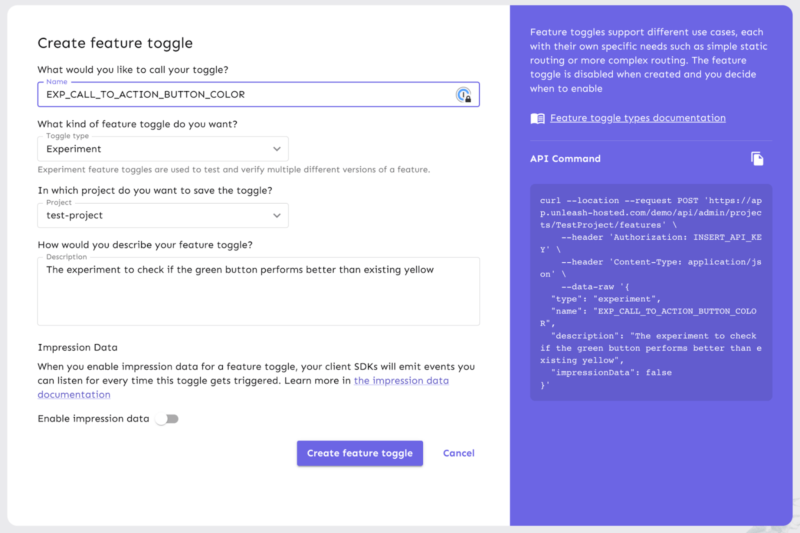

Create feature toggle

Let’s create a feature toggle in Unleash. A few considerations before we delve into this:

- Unleash has many types of feature toggles, so it’s a good idea to add a prefix to a feature toggle name just to indicate what’s behind it. In our case, it’s EXP_

- Choose the correct toggle type, that is experiment.

- I recommend adding a short description for the toggle just for reference and documentation.

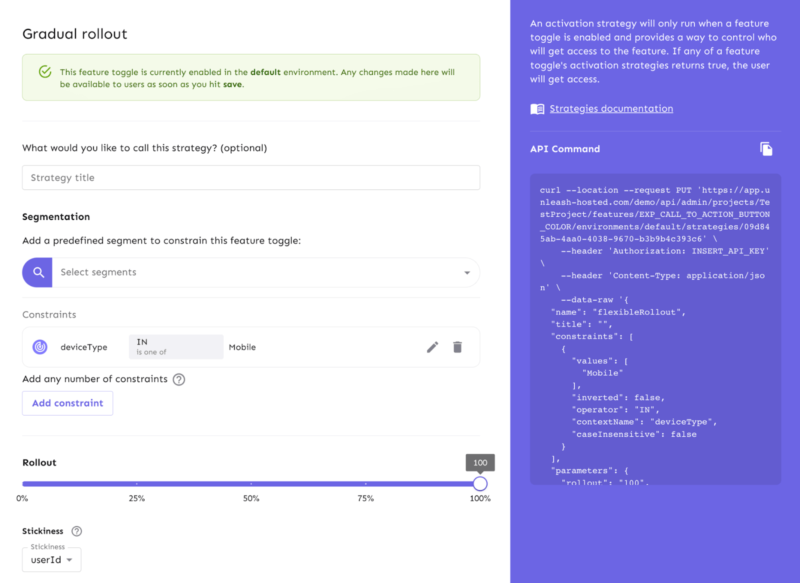

Set activation strategy

Since the experiment is to use 50% of the mobile traffic, we should go for the gradual rollout strategy. This means that the Unleash engine itself will pick out 50% of the users to be part of the experiment.

Additionally, we want to limit the traffic to mobile devices only, so we have to add a constraint based on the deviceType context field.

Finally, we set the stickiness to userId to meet the requirements.
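To build intuition for how a sticky percentage rollout with a constraint behaves, here is a small sketch. It is not Unleash's implementation: the FNV-1a hash, the function names, and the way the toggle name is combined with the userId are all illustrative assumptions. The idea it shows is that hashing a stable identifier into a bucket from 1 to 100 always yields the same decision for the same user.

```typescript
// FNV-1a string hash, chosen for brevity. Illustrative only:
// Unleash SDKs use their own hashing and normalization.
function hashToBucket(key: string): number {
  let hash = 0x811c9dc5;
  for (let i = 0; i < key.length; i++) {
    hash ^= key.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193);
  }
  // Map the unsigned 32-bit hash to a bucket between 1 and 100.
  return ((hash >>> 0) % 100) + 1;
}

interface RolloutContext {
  userId: string;
  deviceType: string;
}

function isInExperiment(
  toggleName: string,
  context: RolloutContext,
  rolloutPercent: number,
): boolean {
  // Constraint: only mobile devices take part.
  if (context.deviceType !== "mobile") {
    return false;
  }
  // Stickiness: the same userId always lands in the same bucket.
  return hashToBucket(`${toggleName}:${context.userId}`) <= rolloutPercent;
}
```

With `rolloutPercent` set to 50, roughly half of the mobile users would be expected to fall into the experiment, and a returning user keeps the same assignment as long as the userId is stable.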

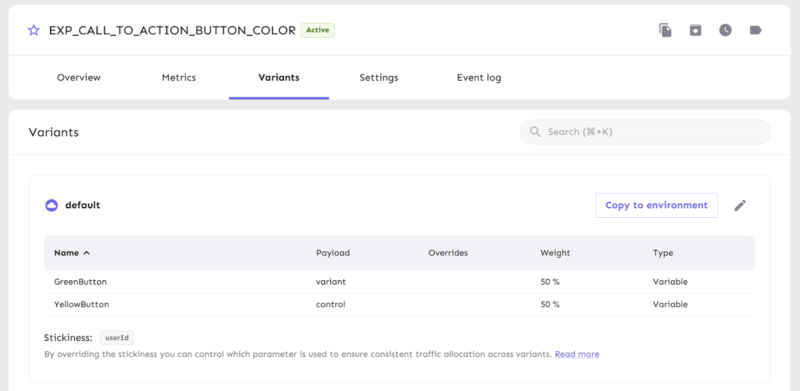

Define experiment variants

Now we need to create the experiment variants. We have created two:

- “YellowButton” is the control variant (the current one).

- “GreenButton” is the alternative we want to test.

It is worth mentioning that both variants will have the same weight (they will receive the same share of traffic directed to the test).

Experiment implementation

First, we have to assign the experiment to the page-visiting user:

We have to adjust the getServerSideProps implementation. To that end, we are passing the proper experiment name and real context values to the getExperimentVariant method. In this context, we have to pass all parameters required by the experiment configuration in Unleash (e.g. deviceType, userId).
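One possible way to derive those context values from the incoming request is sketched below. The cookie name `uid`, the user-agent check, and the helper name `buildUnleashContext` are assumptions for illustration; a real project would use its own session handling and device detection. The `RequestLike` type mirrors the parts of `context.req` that `getServerSideProps` receives.

```typescript
// The parts of the Next.js request object this sketch needs.
interface RequestLike {
  headers: Record<string, string | string[] | undefined>;
  cookies: Partial<Record<string, string>>;
}

interface UnleashContext {
  userId?: string;
  properties: { deviceType: string };
}

function buildUnleashContext(req: RequestLike): UnleashContext {
  const rawAgent = req.headers["user-agent"];
  const userAgent = Array.isArray(rawAgent) ? rawAgent.join(" ") : rawAgent ?? "";

  // Deliberately crude device detection, good enough for a sketch.
  const deviceType = /Mobi|Android|iPhone|iPad/i.test(userAgent) ? "mobile" : "desktop";

  // Hypothetical cookie holding a stable user identifier for stickiness.
  const userId = req.cookies["uid"];

  return { userId, properties: { deviceType } };
}

// In getServerSideProps this could be used roughly as:
//   const context = buildUnleashContext(ctx.req);
//   const variant = client.getExperimentVariant("EXP_SOME_EXPERIMENT", context);
```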

Now, we can implement the UI change:

The component is straightforward. We call the useExperiment hook with our experiment name and then we just set a different background color on the HTML button element.
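The color decision itself can be sketched as a small pure function. The `ExperimentResult` shape and the toggle name in the usage comment are assumptions for this example, while the variant names match the ones defined in Unleash above.

```typescript
// Assumed return shape of the useExperiment hook.
interface ExperimentResult {
  enabled: boolean;
  variant: string;
}

function ctaButtonStyle(experiment: ExperimentResult): { backgroundColor: string } {
  // Users outside the experiment and users in the control variant
  // ("YellowButton") keep the current yellow button.
  const showGreen = experiment.enabled && experiment.variant === "GreenButton";
  return { backgroundColor: showGreen ? "green" : "yellow" };
}

// In a React component this could be used roughly as:
//   const experiment = useExperiment("EXP_CTA_BUTTON_COLOR"); // hypothetical toggle name
//   return <button style={ctaButtonStyle(experiment)}>Buy now</button>;
```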

And that’s it 🙂

Running the experiment when we already have the Unleash tool in place is very straightforward. Just follow the process:

- Define the experiment goal before the implementation.

- Configure the experiment in Unleash based on the requirements.

- Implement the experiment assignment and the variants in the code.

- Define metrics in your tracking tool to measure user behavior (this point is not included in the technical implementation, that’s a task for a business-related team later on).

Smart teams save your money

Benefits galore

So what did we learn from all this?

A/B testing is an easy way to make sure you don’t go in the wrong direction. It is part of a data-driven approach to business and development.

When you rebuild your site and you want it to perform better than the previous one, make sure you take measurements of the current performance in terms of bounce rate, time on page, conversions, etc.

When working on a new platform, integrate it with a tool that allows for A/B testing. For custom software development, the best tool is a feature management tool such as Unleash, because it’s powerful in the hands of a developer who can write custom code.

Eventually, we saved a lot of time by quitting the trial-and-error approach and going for a data-driven one. But think how much more we would have saved had we done this from the start!

So if you work on a new platform or consider redesigning an existing one, ask yourself this question: do I know exactly what my customers want and how they want it to be delivered? If you have doubts, A/B testing may be just the solution you need to get rid of them.
