05 June 2023

How to make a high-traffic application resilient? A high-traffic app optimization case study

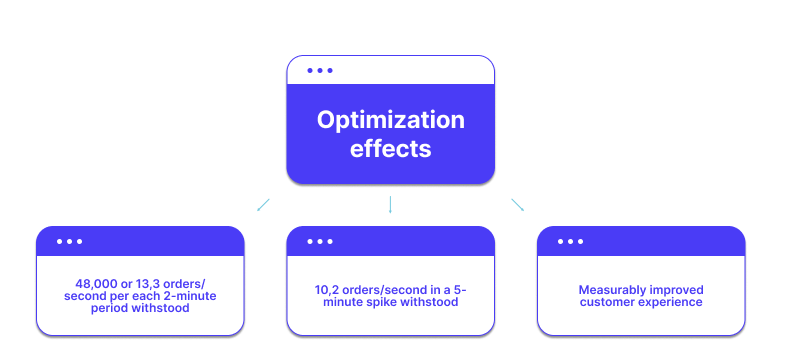

The app of one of our clients struggled with high traffic, crashing frequently. But once we optimized it, it could withstand the highest spikes, even when the order rate reached 13.3 orders per second! There are many things you can do to achieve that. Most importantly, you have to view this as part of a bigger process of analyzing the state of your app. To help you get a clear picture of what an optimization like this looks like, we’re going to tell you exactly how we helped that client achieve such results.

Are you struggling with the optimization of your high-traffic web applications, continuously failing to deliver what users expect? Do you experience app performance issues? Does your app still crash under traffic load despite your best efforts? We’ve recently completed the optimization of a high-traffic app, and the results, backed by some interesting numbers, were a clear success. Read on to find out all about it. You’re going to learn:

- how the app looked before the optimization effort started and how we measured its performance (stress testing!),

- the strategic thought process behind a complex high-traffic app optimization,

- a step-by-step high-traffic app optimization guide, complete with code examples,

- some thoughts on non-relational databases and their role in such projects,

- the tools we used to test and analyze the performance of the app – both before and after our optimization work. With that you will know exactly how to ensure customer satisfaction.

Let’s get right to it!

High traffic web app optimization – case study overview

If your app has severe performance issues and you start wondering if you’ve chosen the right technology stack – you’re not approaching high-traffic website maintenance the right way. It’s not really important whether it’s a Node.js app, a PHP app or a Java/Swift app for a mobile device. Optimizing high-traffic websites or apps comes down to the quality of the code and of the processes that guide the ongoing development. Many high-traffic websites share the same symptoms, but these are caused by completely different issues that are not tied to any one platform.

To tackle high traffic site performance issues holistically and achieve consistent site performance, you need to begin with analyzing the codebase, development process and business context. Then, you improve the process.

The Software House developers have recently been working on a suite of high-traffic apps with the goal of optimizing their performance. SPOILER alert – it went well. 🙂 But what exactly did we do leading up to this outcome? Let’s find out.

The “Before” – what we got to work with

At its core, the product that we’re talking about makes it possible for end users to order and pay for food from a restaurant and pick it up on the go. It consists of a web app for restaurants and a mobile app for individual consumers. Mobile devices and mobile users do have their own concerns, but most of the challenges we talk about here are similar. Let’s focus on the web app then.

The web app has various admin panels for different classes of users – workers, restaurant managers and superadmins that run the whole system and add new places.

The app was once part of a bigger system, before it was separated. This, among other things, resulted in a number of performance challenges we’re going to bring up later.

The Software House’s developers participated in developing new views for the admin panels and in rewriting the web app in React as a single-page application (SPA) combined with a REST API. In order to handle the complex business logic, our developers decided to base it on the CQRS pattern, which makes the architecture easier to maintain in the future.

But the real problem was the performance.

High-traffic app optimization – goals and challenges

Chaotic codebase

As we have said before, the app was once separated from a bigger system. As a result, more than half of its codebase was virtually redundant and not necessary for it to function. Many changes to the development teams and rapid growth resulted in the codebase getting a bit out of hand.

To put it simply, nobody knew exactly how it worked. Therefore, our first challenge was to thoroughly analyze the codebase and understand its intricacies.

Actual optimization and refactoring

The poor quality of the original codebase made it initially difficult to make sense of it all. However, once we understood the code, we could refactor it – remove the needless parts, get rid of redundant requests, combine some other requests into a single one and more. Remember the advice from the beginning? Before improving the code, you must understand the business context first.

Testing

As is often the case with what’s essentially a shopping app, the traffic is not just high – it also has a tendency to change drastically over time. Luckily, it’s usually easy to predict the specific hours and days in a year when it happens.

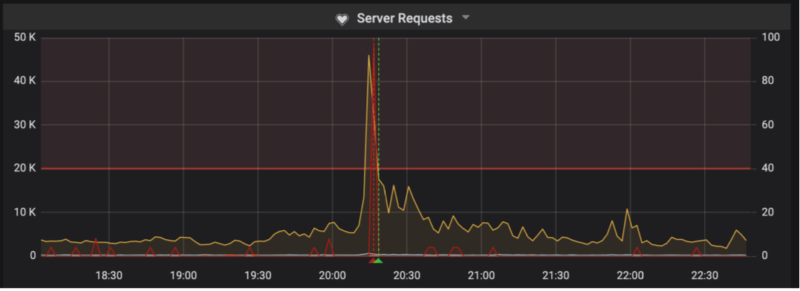

By doing stress testing, we can predict just how much traffic an app can handle and design a dedicated server scaling strategy to respond to predictable spikes (sharp increases in the number of users). It also makes it easy to see whether the optimization process bore fruit and to prepare charts that are easy for business people to understand.
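
To make the link between stress test results and a scaling strategy more concrete, here is a minimal sketch of the arithmetic involved. The function name, the 30% headroom and all the figures are assumptions made for illustration only; they are not the numbers or the tooling from this project.

```python
import math


def instances_needed(peak_rps: float, per_instance_rps: float, headroom: float = 0.3) -> int:
    """Return how many instances cover peak_rps with a safety margin.

    per_instance_rps is the sustained throughput a single instance handled
    in a stress test; headroom is the extra fraction of capacity to reserve.
    """
    if peak_rps < 0 or headroom < 0 or per_instance_rps <= 0:
        raise ValueError("peak_rps and headroom must be >= 0, per_instance_rps > 0")
    target = peak_rps * (1 + headroom)
    # Always keep at least one instance running, even with no traffic.
    return max(1, math.ceil(target / per_instance_rps))


# Hypothetical figures: a predicted spike of 400 requests/s and
# 60 requests/s sustained per instance during the stress test.
print(instances_needed(400, 60))
```

Running the same calculation for each predictable spike gives a rough schedule of how many instances to have ready and when.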

It is thanks to stress testing that we will be able to show you in great detail, by the end of the article, just how much of a difference our optimization efforts made.

Future-proofing the app

Besides that, the remaining work consisted of bug fixing and adding some new features, which was challenging due to the fact that it often had to be done simultaneously with the process of getting the app back on track. To make sure these problems won’t come back, we aimed for three goals simultaneously:

- Architecture efficiency – thorough optimization of the whole codebase to improve its performance.

- Faster development process – code that is easy to read and free of redundancies, in order to improve development processes in the future. One of the reasons we wrote so many tests was also to flatten the steep learning curve for new devs getting into the app.

- Better communication – easier communication with the customer center to shorten bug reporting and fixing, and easy-to-understand metrics that simplify locating the sources of problems.

That way, we could best combine development and business goals in order to turn the whole system into an efficient machine that is ready to work and grow around the clock.

The nitty gritty of high-traffic app optimization

Our optimization work involved many considerations. Some of the most important aspects included:

- Reducing the number of database queries – the fewer, the better.

- Rewriting the largest and most costly queries so that they are faster and simpler, reducing the amount of data that needs to be loaded.

- Moving some of the data to Redis (more on that later).

- Adding an efficient caching mechanism for data that was previously loaded many times.

- Getting rid of unnecessary and unused code.

- Low-level refactoring (e.g. reducing the number of loops).

- Adding indexes to database columns – with the right indexes, the database can filter rows faster for many frequently performed queries. It’s an easy way to speed up querying.

- Removing unnecessary column indexes.
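
The effect of the index-related points above can be checked directly by asking the database for its query plan. The sketch below uses SQLite from the Python standard library purely as a stand-in; the table, the column names and the index name are hypothetical, and the exact wording of the plan differs between database engines and versions.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, restaurant_id INTEGER, total REAL)"
)
conn.executemany(
    "INSERT INTO orders (restaurant_id, total) VALUES (?, ?)",
    [(i % 50, 10.0 + i) for i in range(1000)],
)

QUERY = "SELECT id, total FROM orders WHERE restaurant_id = ?"


def plan(connection: sqlite3.Connection) -> str:
    """Return SQLite's description of how it would execute QUERY."""
    rows = connection.execute("EXPLAIN QUERY PLAN " + QUERY, (7,)).fetchall()
    # The fourth column of each row holds the human-readable plan step.
    return " | ".join(row[3] for row in rows)


plan_before = plan(conn)  # no usable index yet, so the table is scanned
conn.execute("CREATE INDEX idx_orders_restaurant ON orders (restaurant_id)")
plan_after = plan(conn)  # the filter can now be served by the index

print(plan_before)
print(plan_after)
```

The same check helps when removing indexes: an index that never shows up in the plans of the queries you actually run only slows down writes.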

There are a couple of issues here that we should take a closer look at.
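
Reducing the number of queries often means replacing a query issued inside a loop with a single query that fetches everything at once. Here is a minimal sketch of that idea, again with SQLite as a stand-in and with a hypothetical schema; it is not the project's actual code.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE orders (id INTEGER PRIMARY KEY, customer TEXT);
    CREATE TABLE order_items (order_id INTEGER, name TEXT);
    INSERT INTO orders (id, customer) VALUES (1, 'Ann'), (2, 'Bob'), (3, 'Cy');
    INSERT INTO order_items (order_id, name) VALUES
        (1, 'soup'), (1, 'bread'), (2, 'pizza'), (3, 'salad');
    """
)


def items_n_plus_one(connection):
    """One query for the orders, then one more query per order."""
    queries = 1
    result = {}
    order_ids = connection.execute("SELECT id FROM orders ORDER BY id").fetchall()
    for (order_id,) in order_ids:
        rows = connection.execute(
            "SELECT name FROM order_items WHERE order_id = ? ORDER BY name",
            (order_id,),
        ).fetchall()
        queries += 1
        result[order_id] = [name for (name,) in rows]
    return result, queries


def items_single_query(connection):
    """The same data fetched with a single JOIN."""
    result = {}
    rows = connection.execute(
        "SELECT o.id, i.name FROM orders AS o "
        "JOIN order_items AS i ON i.order_id = o.id ORDER BY o.id, i.name"
    )
    for order_id, name in rows:
        result.setdefault(order_id, []).append(name)
    return result, 1


slow_result, slow_queries = items_n_plus_one(conn)
fast_result, fast_queries = items_single_query(conn)
print(slow_queries, fast_queries)
```

Both functions return the same data here because every order has at least one item; an inner JOIN would skip orders with no items, so a LEFT JOIN may be the better fit when those matter.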

The case for using a non-relational database

Redis is an open source in-memory data store, which can be used as either a database, or a caching mechanism. As part of our optimization efforts, we decided to get rid of some queries that are made over and over again, and use Redis instead to store the data in cache memory. Redis supports all kinds of data structures, which makes it very flexible for such operations.
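
A common way to use Redis for this is the cache-aside pattern: look in the cache first and query the database only on a miss. The sketch below is an illustration under stated assumptions rather than the project's code. `InMemoryCache` is a hypothetical stand-in that mimics only the `get` and `setex` calls of a Redis client, so the example runs without a server, and `get_menu`, the key format and the 60-second TTL are made up for the example.

```python
import json
import time
from typing import Callable, Optional


class InMemoryCache:
    """Stand-in for a Redis client, exposing only get() and setex()."""

    def __init__(self) -> None:
        self._data = {}  # key -> (expiry timestamp, value)

    def get(self, key: str) -> Optional[str]:
        entry = self._data.get(key)
        if entry is None or entry[0] <= time.monotonic():
            self._data.pop(key, None)  # drop missing or expired entries
            return None
        return entry[1]

    def setex(self, key: str, ttl_seconds: int, value: str) -> None:
        self._data[key] = (time.monotonic() + ttl_seconds, value)


def get_menu(cache, restaurant_id: int, load_from_db: Callable[[int], dict],
             ttl_seconds: int = 60) -> dict:
    """Cache-aside read: try the cache first, fall back to the database."""
    key = f"menu:{restaurant_id}"
    cached = cache.get(key)
    if cached is not None:
        return json.loads(cached)
    menu = load_from_db(restaurant_id)
    cache.setex(key, ttl_seconds, json.dumps(menu))
    return menu


db_calls = []


def fake_db_loader(restaurant_id: int) -> dict:
    """Pretend database query that records how often it is called."""
    db_calls.append(restaurant_id)
    return {"id": restaurant_id, "items": ["soup", "pizza"]}


cache = InMemoryCache()
first = get_menu(cache, 5, fake_db_loader)
second = get_menu(cache, 5, fake_db_loader)  # served from the cache
print(len(db_calls))
```

The TTL is the main design decision: a short one keeps the data fresh, while a longer one takes more load off the database.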

In addition to that, we also used Redis successfully to store various temporary data (e.g. single-use tokens), including session data.
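
Single-use tokens map naturally onto two Redis operations: a write with an expiry time and an atomic read-and-delete (the `GETDEL` command, available since Redis 6.2). The following is a rough sketch of the idea, not the implementation used in the project. `InMemoryStore` is a hypothetical stand-in that mimics only `set(..., ex=...)` and `getdel`, and the key prefix and the 5-minute lifetime are assumptions.

```python
import secrets
import time
from typing import Optional


class InMemoryStore:
    """Stand-in for a Redis client, exposing only set(..., ex=) and getdel()."""

    def __init__(self) -> None:
        self._data = {}  # key -> (expiry timestamp, value)

    def set(self, key: str, value: str, ex: int) -> None:
        self._data[key] = (time.monotonic() + ex, value)

    def getdel(self, key: str) -> Optional[str]:
        # Remove the entry while reading it, so it can be read only once.
        entry = self._data.pop(key, None)
        if entry is None or entry[0] <= time.monotonic():
            return None
        return entry[1]


def issue_token(store, user_id: str, ttl_seconds: int = 300) -> str:
    """Create a random token that maps to user_id until it expires."""
    token = secrets.token_urlsafe(32)
    store.set(f"token:{token}", user_id, ex=ttl_seconds)
    return token


def consume_token(store, token: str) -> Optional[str]:
    """Return the user id once; later calls with the same token return None."""
    return store.getdel(f"token:{token}")


store = InMemoryStore()
token = issue_token(store, "user-1")
first_use = consume_token(store, token)
second_use = consume_token(store, token)
print(first_use, second_use)
```

Because the read and the delete happen in one step, two concurrent requests cannot both redeem the same token.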

Test and analyze

To get the clearest possible view of the app’s original state, we employed various tools.

A perfect tool for getting a bird’s eye view of the app. In particular, you can learn what kind of scripts/libraries are used and how much, find specific areas in need of code optimization and get useful alerts for all kinds of traffic-related issues.

Stress testing made easy. With Artillery, we could measure exactly how much traffic our app can handle as well as the performance of the app before and after the optimization. As you optimize your app in an iterative way, Artillery provides a great way to quickly assess your progress or prove it to your stakeholders.

The Software House’s own open-source framework for writing E2E testing scenarios. The app’s original test coverage was quite poor, and automated testing allowed us to quickly turn it all around.

To further improve the testing and the overall stability of the app, we implemented functional tests with Behat. A big advantage of this solution is that it is very easy to get into and start writing new test cases.

- Other tools

The tools above are just some highlights of the many we employed during the process. The list includes (but is not limited to) Splunk, Kibana, Grafana, ElasticSearch, Helm, Vault and Jenkins.

The “After” – what we did and what we have learned

As we have said before, the optimization process was an outstanding success.

Its true test came on one of the days with the highest number of orders, during a week of nationwide charity activities. Let’s take a look at the facts.

- Back in 2018, the app crashed during the spikes in traffic. In addition to that, on the very same day in 2019, the traffic increased by an additional 20 percent. Despite that, the refurbished app worked smoothly all day.

- On the chart below, you can see the number of users per 2-minute period during the day. During the highest spike, it reached around 48,000, with roughly 13.3 orders per second.

- During the highest 5-minute spike, the order rate was between 10 and 12 orders per second.

The system responded well to these sudden spikes, appropriately increasing and decreasing the use of instances and jobs required to handle the volume.

How do you go from the initial state to such results? As we have confirmed during the project, it’s important to:

- Look at the processes holistically – not as a collection of bugs or problems but as a development process that went wrong and needs permanent reforms.

- Look for both big and easy optimization opportunities. The app may have some very simple shortcomings. Don’t assume that even the simplest things are done right. There may be many easy optimization wins to score.

- Measure the improvements before and after using specialized DevOps/QA analytics tools.
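
Measuring before and after is easiest when both runs are reduced to the same summary figure, such as the 95th percentile of response times. Below is a minimal sketch using the nearest-rank method; the latency samples are made up for illustration and are not measurements from this project.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample such that at least
    pct percent of all samples are less than or equal to it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def compare(before_ms, after_ms, pct=95):
    """Summarize two stress test runs by the same percentile."""
    before = percentile(before_ms, pct)
    after = percentile(after_ms, pct)
    improvement = round((before - after) / before * 100, 1)
    return {"before": before, "after": after, "improvement_pct": improvement}


# Hypothetical response times in milliseconds from two stress test runs.
before_run = [120, 150, 180, 200, 240, 300, 450, 600, 900, 1500]
after_run = [40, 45, 50, 55, 60, 70, 80, 95, 110, 160]
summary = compare(before_run, after_run)
print(summary)
```

A high percentile is usually more telling than the average here, because spikes hurt the slowest requests first.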

Feel like you can do it too now? Remember – practice makes perfect so make your application resistant to traffic spikes and fully ready for commercial success!

We hope that this article will make it easier for you to understand what exactly is needed to truly optimize a high-traffic app. 🚀

If you still have doubts, or if you would like TSH developers to help optimize your application, do not hesitate to contact us. ☎️