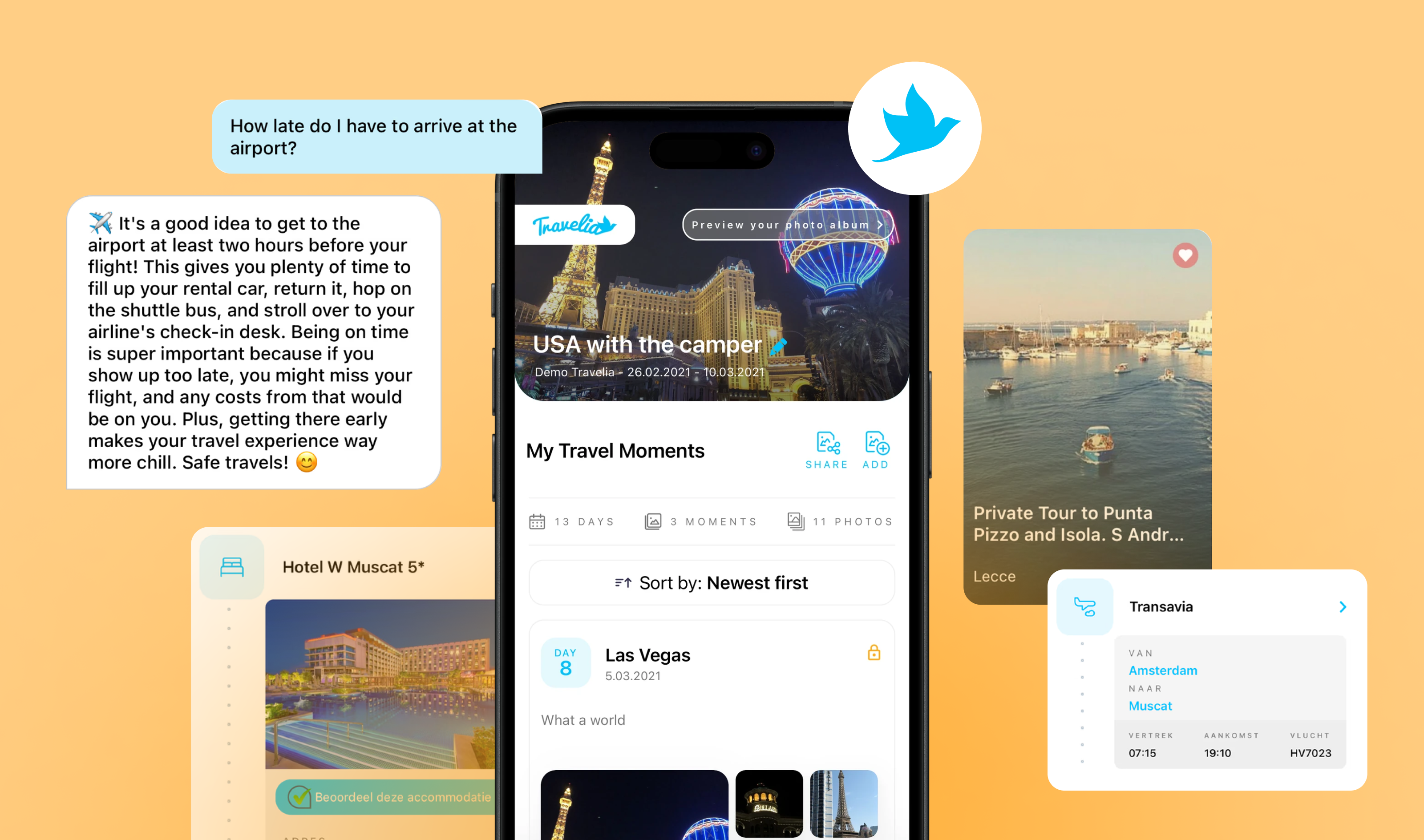

The Software House was brought in to build an AI-powered travel assistant for Travelia's mobile app, replacing slow and cost-inefficient customer support with an AI chatbot that delivers instant, personalized answers to millions of tourists.

The AI assistant now handles 99.6% of all queries automatically, delivering instant responses to 13 million travelers and cutting support costs.

Partnership goal:

→ To deliver an AI-powered travel assistant that provides instant, accurate answers while cutting support costs and scaling to handle millions of interactions annually.

The client

Travelia is a mobile application sold to travel agencies and tour operators, serving as a white-label platform for tourists who have purchased a trip.

The app is customized to match each agency's corporate colors and branding, operating under the agency's name to ensure a cohesive, integrated experience for both the travel agency and the end user.

Travelia currently holds a monopoly in the Dutch market and operates in several European countries.

Core app functions

Travel timeline with documents (tickets, accommodation, reservations)

Recommendations for must-see local attractions

Collecting photos and generating a holiday photo book

To-do checklist before traveling (parking, luggage)

Contact with the tour guide or chatbot

INDUSTRY

Travel

COUNTRY

The Netherlands

SERVICE

AI assistant (chatbot)

Challenge

The challenge was to improve the travelers' vacation experience. The solution needed to:

Provide more accurate information

Answer questions quickly

Increase revenue for Travelia's clients through upselling

Without a scalable solution, Travelia risked losing its competitive advantage, burning through support budgets, and failing to deliver the instant, personalized experience that modern travelers expect.

Solution

The Software House delivered the following components:

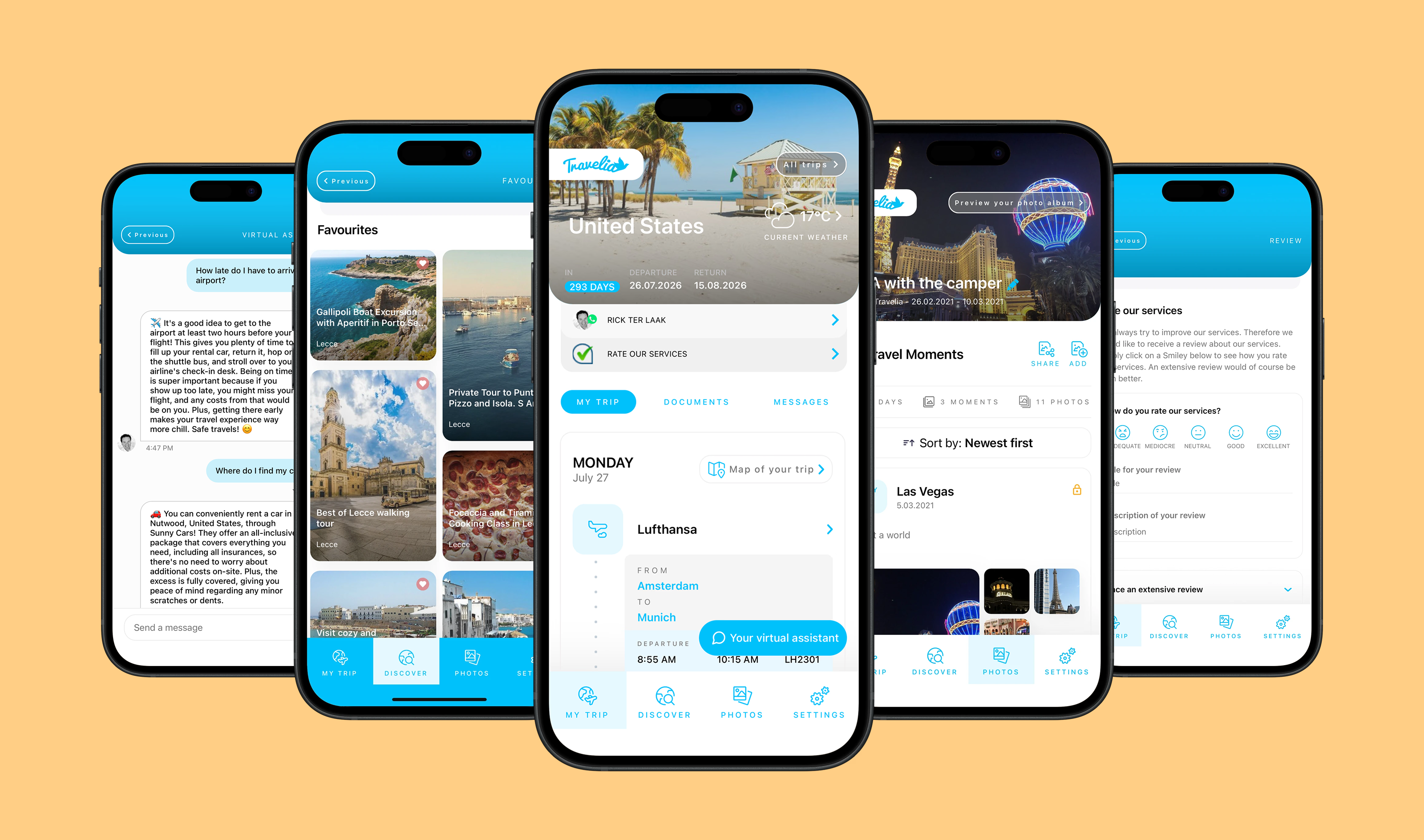

AI-powered travel assistant integrated into Travelia's iOS and Android apps with real-time answers based on booking and location data

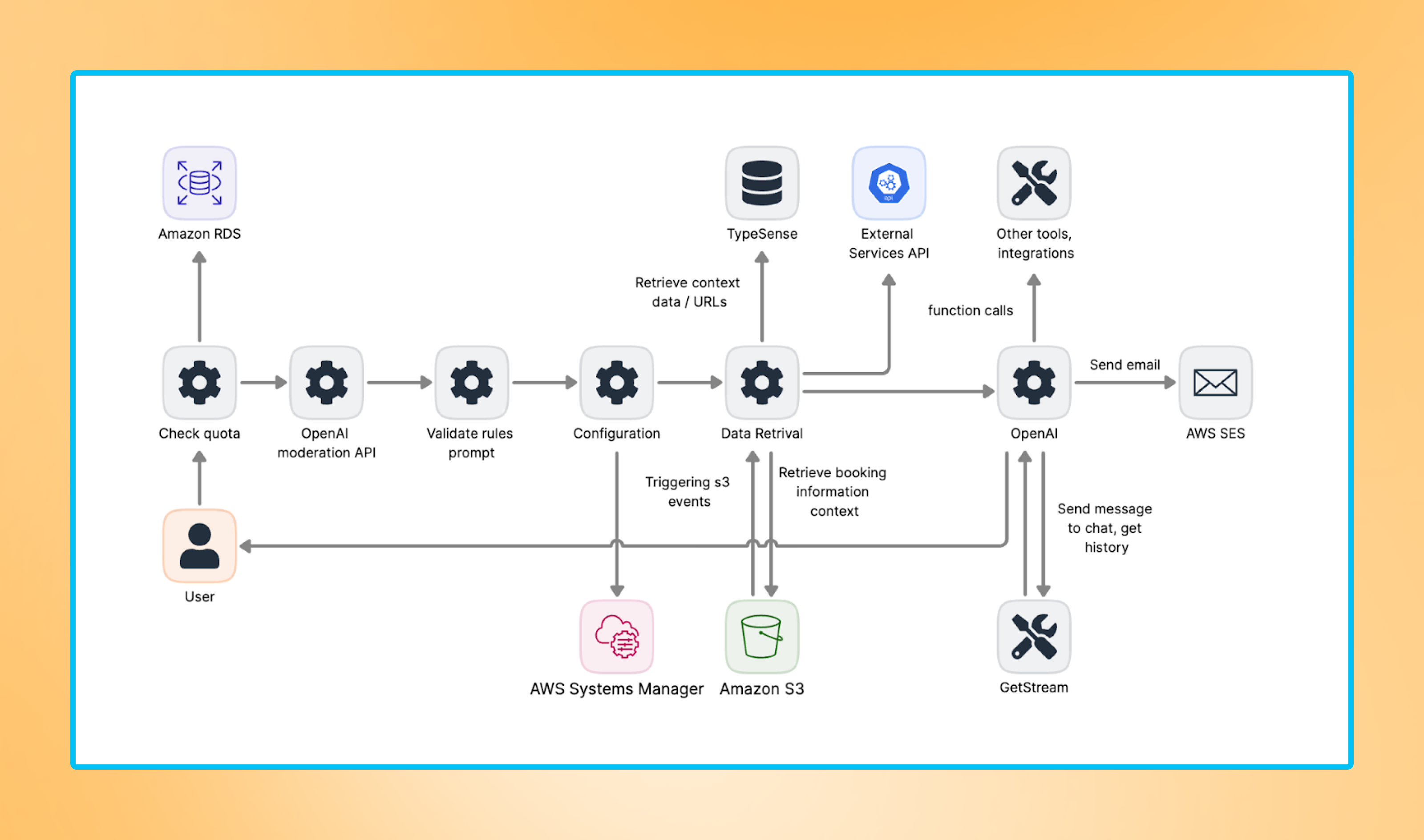

Embedding system that retrieves data, selects the relevant information, and uses AI to reason over it

Serverless architecture using AWS Step Functions for message validation, processing, and response delivery

Message validation with rate limiting, content moderation via OpenAI's Moderation API, and context checking

Content restrictions using Guardrails to limit responses to travel-related topics only

Cost optimization, including local embedding, that reduced prompt size from 2,500 to 700 tokens

Prompt-Test library for automated prompt testing, now used across all of The Software House's (TSH) LLM projects

User profiling system based on age groups, travel companions, and activity preferences

Pre-departure checklist with sponsored recommendations, such as travel eSIMs or parking

Automatic notifications through pinned messages, displaying check-in reminders and sponsored links

UI modifications to Stream Chat components to better fit Travelia's needs

Process

Workshops and discovery

The Product Design team ran workshops and created Figma designs, while the Architecture team consulted AWS experts and built a proof of concept using Claude. Once the proof of concept was validated, the teams wrote specifications and built a task backlog.

OpenAI selection

The team evaluated models on AWS compatibility, cost, and response quality. OpenAI's models were selected for their contextual understanding, natural conversation flow, and function-calling capability.

Embedding system

The language model on its own had no knowledge of hotel amenities or flight times, so the team built a system that first retrieved the relevant information and then let the model reason over it.

They used Typesense to store vectors for semantic search and pulled data from external APIs: Amadeus, Google Places, and GeoNames.
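The retrieve-then-reason pattern described above can be illustrated with a minimal, self-contained sketch. It is not the team's implementation: the documents are hypothetical sample data, the in-memory list stands in for the Typesense vector store, and the `embed` function is a toy bag-of-words vector rather than a real embedding model. It ranks documents by cosine similarity to the question and places only the top matches into the prompt.

```python
import math
import re
from collections import Counter

# Hypothetical sample data; an in-memory stand-in for the vector store (Typesense in the case study).
DOCUMENTS = [
    "Hotel Aurora has an outdoor pool and free breakfast.",
    "Flight KL1234 departs Amsterdam at 09:40.",
    "The old town market is open on Saturdays.",
]

def embed(text: str) -> Counter:
    """Toy embedding: term counts of lowercase words (a real system would call an embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(question: str, k: int = 1) -> list[str]:
    """Return the k documents most similar to the question."""
    q = embed(question)
    return sorted(DOCUMENTS, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]

def build_prompt(question: str, k: int = 1) -> str:
    """Ground the model: only retrieved facts go into the prompt."""
    context = "\n".join(f"- {doc}" for doc in retrieve(question, k))
    return (
        "Answer using only the context below. If the answer is not there, say so.\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )

print(build_prompt("Does the hotel have a pool?"))
```

Because only the top-ranked snippets reach the model, the prompt stays small and the answer is tied to retrieved facts rather than the model's general knowledge.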

Serverless architecture

The team used AWS Step Functions to break the workflow into three steps:

Message validation

Message processing

Sending a message to the user

With Step Functions, the team could rerun individual steps when the generative AI model returned unexpected output.
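A three-step workflow like this maps naturally onto an Amazon States Language definition in which each step is a separate Task state with its own retry policy, so a failure in processing does not force validation to run again. The sketch below builds such a definition in Python and prints it as JSON. The Lambda ARNs, region, account ID, function names, and the custom error name `UnexpectedModelOutputError` are all hypothetical placeholders, not details from the project.

```python
import json

# Hypothetical Lambda ARN template; region, account ID, and function names are placeholders.
LAMBDA_ARN = "arn:aws:lambda:eu-west-1:123456789012:function:{}"

def task(function_name, next_state=None, retry_on=("States.TaskFailed",)):
    """Build one Task state that invokes a Lambda function and retries only itself on failure."""
    state = {
        "Type": "Task",
        "Resource": LAMBDA_ARN.format(function_name),
        "Retry": [{
            "ErrorEquals": list(retry_on),
            "IntervalSeconds": 2,   # wait before the first retry
            "MaxAttempts": 3,
            "BackoffRate": 2.0,     # exponential backoff between attempts
        }],
    }
    if next_state:
        state["Next"] = next_state
    else:
        state["End"] = True
    return state

definition = {
    "Comment": "Chat message pipeline sketch: validate, process, send",
    "StartAt": "ValidateMessage",
    "States": {
        "ValidateMessage": task("validate-message", "ProcessMessage"),
        # Hypothetical custom error a processing Lambda could raise when model output fails its checks.
        "ProcessMessage": task("process-message", "SendMessage",
                               retry_on=("UnexpectedModelOutputError", "States.TaskFailed")),
        "SendMessage": task("send-message"),
    },
}

print(json.dumps(definition, indent=2))
```

When a Python Lambda raises an exception, Step Functions reports the exception's class name as the error, which is what lets a retrier match a custom name such as the one above.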

Hallucination prevention and safety

The team implemented multiple safeguards to keep responses reliable and safe: per-session message limits to prevent abuse, OpenAI's Moderation API to filter harmful content, and a combination of several LLM calls that validate context, enforce guardrails, and prevent hallucinations.

This approach blocks off-topic or unreliable answers and keeps the assistant focused on verified, relevant information.
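The pre-model checks can be pictured as a short chain in which each layer can reject a message before it reaches the main model. This is a minimal sketch under stated assumptions: `is_flagged` is a hypothetical stand-in for a moderation call (such as OpenAI's Moderation API), `is_on_topic` is a hypothetical stand-in for an LLM-based travel-topic classifier, and both are injected as plain functions so the logic runs without any external service. Hallucination checks on the model's answer are not shown.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable

@dataclass
class Verdict:
    allowed: bool
    reason: str = ""

class MessageValidator:
    """Sketch of layered checks run before a message reaches the main model."""

    def __init__(self, max_messages_per_session: int,
                 is_flagged: Callable[[str], bool],
                 is_on_topic: Callable[[str], bool]):
        self.max_messages = max_messages_per_session
        self.is_flagged = is_flagged      # hypothetical stand-in for a moderation call
        self.is_on_topic = is_on_topic    # hypothetical stand-in for a topic classifier
        self.counts = defaultdict(int)    # messages seen per session (in memory only)

    def validate(self, session_id: str, text: str) -> Verdict:
        # Every incoming message counts toward the session limit, even if rejected later.
        self.counts[session_id] += 1
        if self.counts[session_id] > self.max_messages:
            return Verdict(False, "rate_limited")
        if self.is_flagged(text):
            return Verdict(False, "moderation")
        if not self.is_on_topic(text):
            return Verdict(False, "off_topic")
        return Verdict(True)

# Toy keyword checks stand in for the real moderation and topic calls.
validator = MessageValidator(
    max_messages_per_session=2,
    is_flagged=lambda t: "attack" in t.lower(),
    is_on_topic=lambda t: any(w in t.lower() for w in ("hotel", "flight", "beach")),
)
print(validator.validate("s1", "Is there a beach near my hotel?"))  # Verdict(allowed=True, reason='')
print(validator.validate("s1", "Write me a poem about tax law"))    # Verdict(allowed=False, reason='off_topic')
print(validator.validate("s1", "What time is my flight?"))          # Verdict(allowed=False, reason='rate_limited')
```

Running the cheapest check (the counter) first means abusive sessions are stopped before any paid API call is made.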

Cost optimization

Local embeddings reduced prompt size from 2,500 to 700 tokens (a 72% reduction), significantly lowering inference costs.
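The 72% figure follows directly from the token counts. For $N$ requests, and assuming everything else in each request stays the same, the saving in prompt tokens is:

```latex
\text{reduction} = \frac{2500 - 700}{2500} = \frac{1800}{2500} = 0.72 = 72\%,
\qquad
\Delta T = (2500 - 700)\,N = 1800\,N \text{ tokens}
```

At a flat per-token input price, prompt cost per request falls by the same 72%.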

Hotel data was moved to S3 instead of a traditional database to further optimize storage and access costs.

In addition, the team built Prompt-Test, an internal tool for automated prompt testing that is now used across all TSH LLM projects, improving efficiency and consistency at scale.
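Prompt-Test's own interface is not described here, so the following is only a hypothetical sketch of what automated prompt testing generally looks like: each case pairs a user message with strings the reply must or must not contain, and a harness runs every case and collects failures. `PromptCase`, `run_cases`, and `fake_model` are illustrative names, and the fake model replaces a real LLM call so the example runs offline.

```python
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class PromptCase:
    name: str
    user_message: str
    must_contain: list[str] = field(default_factory=list)
    must_not_contain: list[str] = field(default_factory=list)

def run_cases(model: Callable[[str], str], cases: list[PromptCase]) -> dict[str, list[str]]:
    """Run every case through the model and return the problems found, keyed by case name."""
    failures = {}
    for case in cases:
        reply = model(case.user_message).lower()
        problems = [f"missing '{s}'" for s in case.must_contain if s.lower() not in reply]
        problems += [f"unexpected '{s}'" for s in case.must_not_contain if s.lower() in reply]
        if problems:
            failures[case.name] = problems
    return failures

def fake_model(message: str) -> str:
    """Hypothetical stand-in for the deployed prompt plus model."""
    if "pool" in message.lower():
        return "Yes, Hotel Aurora has an outdoor pool."
    return "Sorry, I can only help with questions about your trip."

cases = [
    PromptCase("hotel amenity", "Does my hotel have a pool?", must_contain=["pool"]),
    PromptCase("off-topic refusal", "Who will win the election?",
               must_contain=["only help"], must_not_contain=["election"]),
]
print(run_cases(fake_model, cases))  # {}
```

Substring checks are the simplest possible assertion; because real model output varies between runs, a production harness would likely run each case several times or use a second model to grade replies.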

User profiling

The system profiled users by age group, travel companions, and activity style. The chatbot asked profiling questions naturally during conversations, rather than showing a survey at launch.
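The case study does not say how profiling questions are scheduled, so this sketch assumes one simple policy: on every third turn, the assistant asks about the first profile field that is still unknown, and stops once the profile is complete. The field values, question wording, and `every_n_turns` parameter are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class TravelerProfile:
    # None means "not yet known"; the assistant fills these in from the conversation.
    age_group: Optional[str] = None       # e.g. "18-30", "31-50", "50+"
    companions: Optional[str] = None      # e.g. "solo", "partner", "family"
    activity_style: Optional[str] = None  # e.g. "relaxed", "active", "cultural"

# Hypothetical wording; dict order is the order in which missing fields are asked about.
PROFILING_QUESTIONS = {
    "companions": "Who are you traveling with on this trip?",
    "activity_style": "Do you prefer a relaxed holiday or a packed itinerary?",
    "age_group": "To tailor suggestions, which age group are you in?",
}

def next_profiling_question(profile: TravelerProfile, turn: int,
                            every_n_turns: int = 3) -> Optional[str]:
    """Return one unanswered profiling question on every n-th turn, otherwise None."""
    if turn == 0 or turn % every_n_turns != 0:
        return None  # let the traveler's own questions come first
    for field_name, question in PROFILING_QUESTIONS.items():
        if getattr(profile, field_name) is None:
            return question
    return None  # profile complete, stop asking

profile = TravelerProfile()
print(next_profiling_question(profile, turn=3))  # Who are you traveling with on this trip?
```

Spacing questions out and skipping fields that are already known keeps profiling conversational rather than turning it into a survey.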

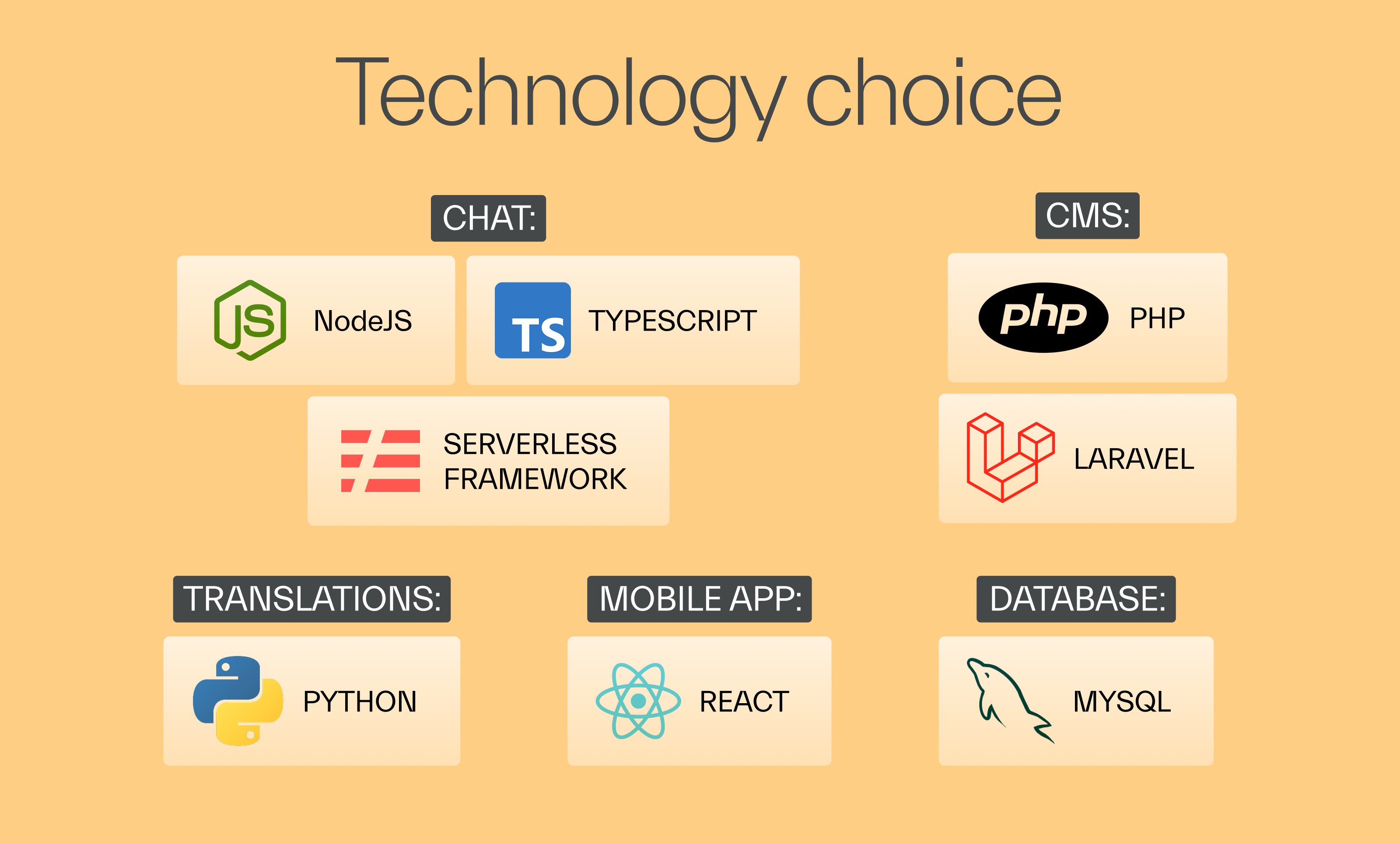

Technology stack

AWS | Integrations

Outcome

The partnership between Travelia and The Software House resulted in a GenAI-powered travel assistant that transformed customer support.

→ 99.6% of queries handled by the AI assistant, with only 0.4% requiring human agents.

→ Responses delivered within seconds, replacing long wait times for 13 million travelers.

→ 72% reduction in prompt size (from 2,500 to 700 tokens) through local embedding.

→ Personalized recommendations improving traveler satisfaction and retention.

Testimonial

Their role in the project turned out to be critical.

We had a clear definition of done, which improved our communication tremendously.

Having defined a clear path, we had some fun working together, and I think that kept us motivated. They're actively trying to make everything work better.

Rick ter Laak

CEO
